Simulation-Based Agent Evaluation

Generate realistic multi-turn conversations with your AI agents. Evaluate every turn. Ship with evidence, not hope.

Trusted by industry leaders

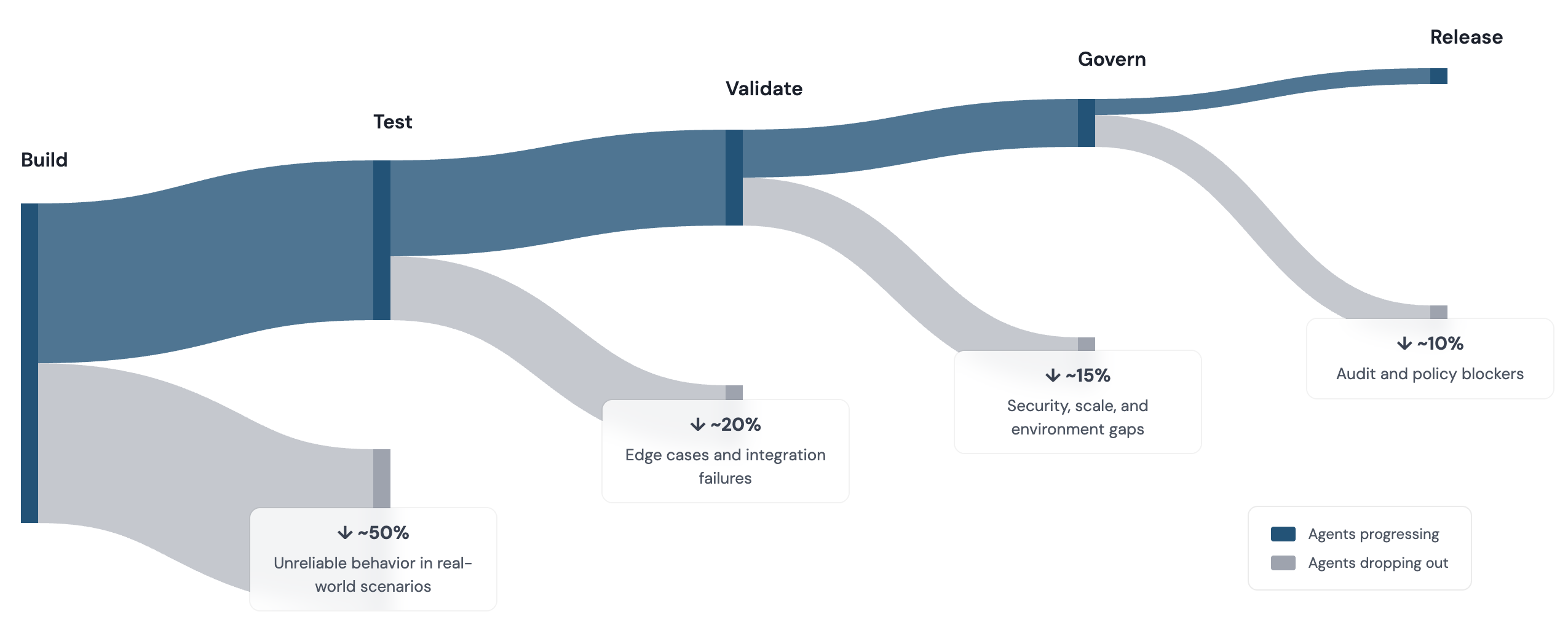

95% of AI Agents Never Make It to Production

An illustrative view of common failure points observed across enterprise AI deployments.

How It Works

Test your agents through realistic multi-turn conversations, not static benchmarks.

01

Simulate Real Conversations

Generate multi-turn interactions with synthetic users who have distinct profiles, goals, and behaviors.

02

Catch Multi-Turn Failures

Surface context loss, tool misuse, and policy violations that only emerge across conversation turns.

03

Evaluate Every Turn

Score agent responses on helpfulness, coherence, relevance, faithfulness, and goal completion.

04

Govern for Confident Release

Set quality gates and readiness standards that agents must pass before reaching production.

Agent Build Lifecycle

Arklex supports every stage of the agent lifecycle.

Demo to Deployment in Minutes

See how Arklex helps teams ship AI agents faster.

Test Continuously, Deploy Confidently.

From development to production, see the transformation in your workflow.

Production Ready

Issues are identified and fixed before users encounter them.

Edge-Case Coverage

Agents perform consistently beyond standard scenarios.

Rapid Recovery

Clear signals make rollbacks, rollforwards, and fixes faster and safer.

Continuous Testing

Readiness is tested and validated 24/7, not just at release time.

Open Source

Open-source multi-turn agent testing framework

ArkSim generates synthetic users that hold realistic multi-turn conversations with your agent, then evaluates every turn. Define scenarios, simulate conversations, and catch failures before production. Works with any agent framework.

Frequently Asked Questions

What is simulation-based evaluation?

Instead of scoring a static dataset, Arklex creates the test data for you. It generates multi-turn conversations between synthetic users and your agent, then evaluates how the agent handled each turn. The result is coverage for failure modes you would not catch with single-turn benchmarks.
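
To make the idea concrete, here is a minimal sketch of a simulate-then-score loop. It is not the Arklex or ArkSim API: `simulate`, `Turn`, `echo_agent`, and `keyword_overlap` are hypothetical names, the user turns are scripted rather than generated, and the scorer is a toy stand-in for a real evaluator.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Message = Dict[str, str]  # {"role": "user" | "assistant", "content": "..."}


@dataclass
class Turn:
    user: str
    agent: str
    score: float


def simulate(
    agent: Callable[[List[Message]], str],
    user_turns: List[str],
    score_turn: Callable[[str, str], float],
) -> List[Turn]:
    """Feed user messages to the agent one at a time and score every reply."""
    history: List[Message] = []
    results: List[Turn] = []
    for message in user_turns:
        history.append({"role": "user", "content": message})
        reply = agent(history)  # the agent sees the full conversation so far
        history.append({"role": "assistant", "content": reply})
        results.append(Turn(message, reply, score_turn(message, reply)))
    return results


# Hypothetical toy agent: repeats the latest user message back.
def echo_agent(history: List[Message]) -> str:
    return "You said: " + history[-1]["content"]


# Hypothetical toy scorer: 1.0 if the reply shares any word with the user message.
def keyword_overlap(user: str, reply: str) -> float:
    shared = set(user.lower().split()) & set(reply.lower().split())
    return 1.0 if shared else 0.0


turns = simulate(
    echo_agent,
    ["I want a refund", "Actually, exchange it instead"],
    keyword_overlap,
)
for t in turns:
    print(f"{t.score:.1f}  {t.user!r} -> {t.agent!r}")
```

In a real setup the scripted list would be replaced by a synthetic user that reacts to the agent's replies, and the scorer by an evaluator for dimensions such as helpfulness or faithfulness.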

How is this different from other evaluation tools?

Most tools need you to bring your own test conversations. Arklex generates them. That means you can test for scenarios that have not happened in production yet, including edge cases where users push back, change their mind, or ask unexpected follow-ups.

Why does multi-turn testing matter?

An agent can ace a single question and still fall apart in a real conversation. Context gets lost by turn five. Tool calls break when the user changes direction. The agent contradicts something it said two turns ago. These are the failures that reach production, and they only show up when you test across multiple turns.
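
The sketch below illustrates one such failure with a deliberately buggy toy agent. Everything here is hypothetical (`stateless_agent`, `run`, `asks_again_for_known_info`); the point is only that each reply looks reasonable in isolation, while the conversation as a whole reveals the context loss.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def stateless_agent(history: List[Message]) -> str:
    """Hypothetical buggy agent: it only looks at the latest user message."""
    last = history[-1]["content"].lower()
    if "order" in last and any(ch.isdigit() for ch in last):
        return "Thanks, I have your order number."
    if "status" in last:
        return "Could you give me your order number?"
    return "How can I help?"


def run(agent: Callable[[List[Message]], str], user_turns: List[str]) -> List[str]:
    """Play the user turns against the agent and collect its replies."""
    history: List[Message] = []
    replies: List[str] = []
    for text in user_turns:
        history.append({"role": "user", "content": text})
        reply = agent(history)
        history.append({"role": "assistant", "content": reply})
        replies.append(reply)
    return replies


def asks_again_for_known_info(replies: List[str], after_turn: int) -> bool:
    """Flag context loss: the agent requests the order number after it was given."""
    return any("order number?" in r for r in replies[after_turn:])


replies = run(
    stateless_agent,
    ["My order is 4821", "I changed my address", "What's the status?"],
)
context_lost = asks_again_for_known_info(replies, after_turn=1)
print(context_lost)
```

A single-turn test of any of these three messages would pass. Only the three-turn run shows the agent asking for information the user already provided.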

What agents and frameworks are supported?

Any agent, any framework. If it exposes an HTTP endpoint, speaks the A2A protocol, or is a Python class, Arklex can test it. The platform handles the simulation and evaluation regardless of how your agent is built.
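
One way to picture this is a thin adapter layer that turns each kind of agent into the same callable. The following is an illustrative sketch, not the platform's actual integration code: the function names, the `respond` method, and the JSON request and response shapes (`{"messages": [...]}` in, `{"reply": "..."}` out) are all assumptions, and A2A is left out.

```python
import json
import urllib.request
from typing import Callable, Dict, List

Message = Dict[str, str]
AgentFn = Callable[[List[Message]], str]


def from_python_object(obj: object, method: str = "respond") -> AgentFn:
    """Wrap an object whose method takes the message history and returns text."""
    return getattr(obj, method)


def from_http_endpoint(url: str, timeout: float = 30.0) -> AgentFn:
    """Wrap an HTTP endpoint.

    Assumed contract (hypothetical): POST {"messages": [...]} as JSON,
    receive {"reply": "..."} as JSON.
    """

    def call(history: List[Message]) -> str:
        body = json.dumps({"messages": history}).encode("utf-8")
        request = urllib.request.Request(
            url,
            data=body,
            headers={"Content-Type": "application/json"},
            method="POST",
        )
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return json.loads(response.read().decode("utf-8"))["reply"]

    return call


# Hypothetical in-process agent used to exercise the Python adapter.
class GreeterAgent:
    def respond(self, history: List[Message]) -> str:
        return f"Hello! You sent {len(history)} message(s)."


agent = from_python_object(GreeterAgent())
reply = agent([{"role": "user", "content": "hi"}])
print(reply)
```

Because both adapters return the same `AgentFn` shape, the simulation and scoring code never needs to know how the agent is hosted.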

Can I integrate this into my development workflow?

Arklex works as a CI/CD quality gate that runs on every code change, and as a standalone platform for testing, governance, and deployment approval. Teams typically start with ad-hoc testing during development and add CI gates once they have a baseline.
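
As a rough illustration of what a CI gate boils down to, the sketch below compares mean per-metric scores against minimum thresholds and derives an exit code. The thresholds, metric values, and the `gate` function are made up for the example and do not reflect Arklex defaults or its configuration format.

```python
from statistics import mean
from typing import Dict, List

# Hypothetical minimum mean score per metric.
THRESHOLDS: Dict[str, float] = {
    "helpfulness": 0.80,
    "faithfulness": 0.90,
    "goal_completion": 0.75,
}


def gate(scores: Dict[str, List[float]], thresholds: Dict[str, float]) -> List[str]:
    """Return human-readable failures; an empty list means the gate passes."""
    failures: List[str] = []
    for metric, minimum in thresholds.items():
        values = scores.get(metric, [])
        if not values:
            failures.append(f"{metric}: no scores recorded")
            continue
        average = mean(values)
        if average < minimum:
            failures.append(f"{metric}: mean {average:.2f} is below {minimum:.2f}")
    return failures


# Made-up per-conversation scores from one evaluation run.
example_scores = {
    "helpfulness": [0.90, 0.85, 0.80],
    "faithfulness": [0.95, 0.70, 0.90],
    "goal_completion": [1.00, 0.50, 1.00],
}

failures = gate(example_scores, THRESHOLDS)
for line in failures:
    print("FAIL", line)

# In a CI job, pass this value to sys.exit() so a failed gate blocks the merge.
exit_code = 1 if failures else 0
```

Averaging is the simplest possible rule. A stricter gate might also fail on any single conversation that falls below a floor, so that one bad run cannot hide behind a good mean.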

Is my data secure?

Workspaces are fully isolated with separate data storage. The platform can run on your infrastructure, keeping all conversations and evaluation data in your environment. Private cloud deployment is available for enterprise customers.